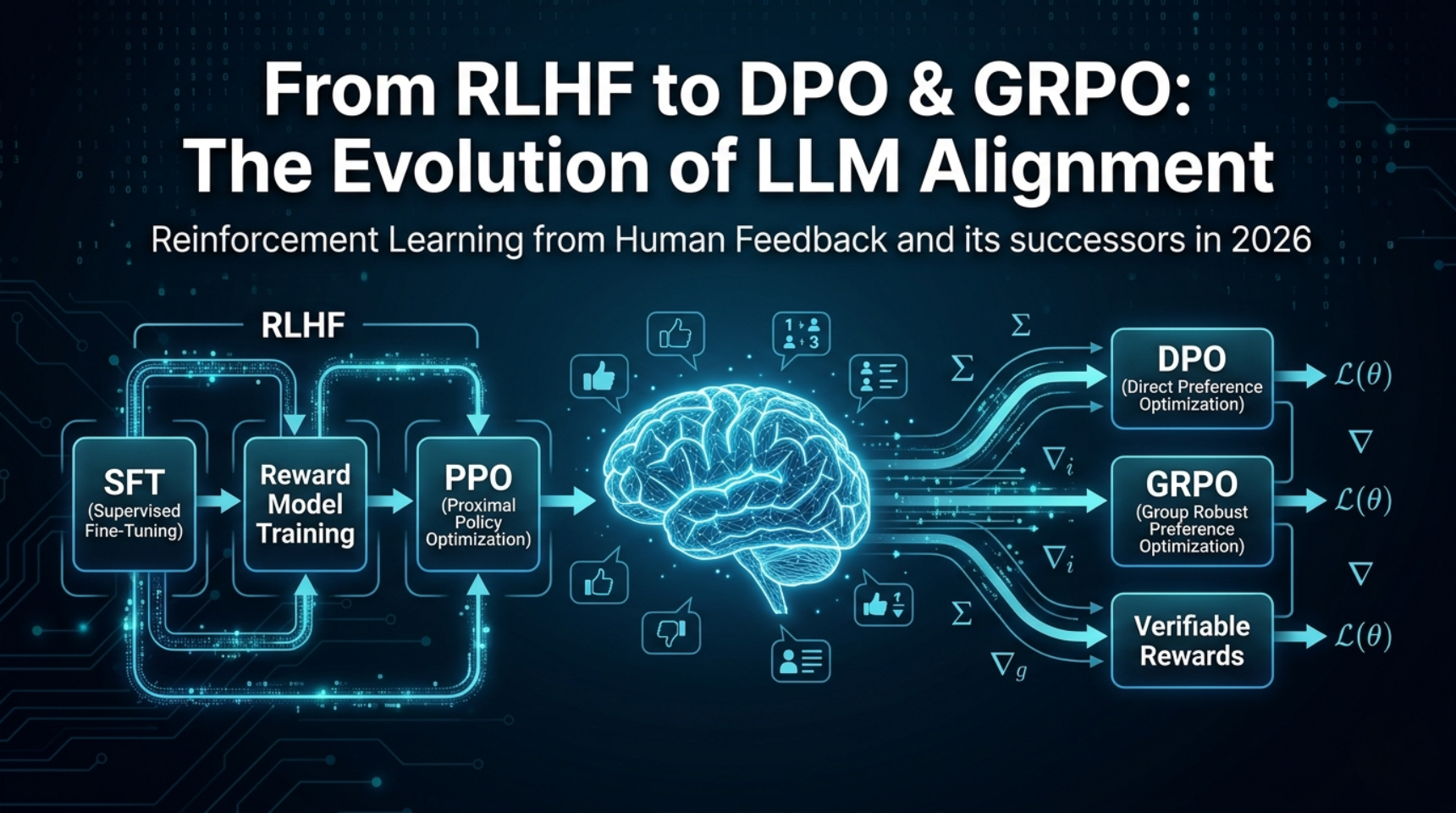

RLHF Explained: How Human Feedback Trains AI Models in 2026

Last updated: April 2026 Reinforcement Learning from Human Feedback (RLHF) is a 3-stage training pipeline that aligns large language models with human preferences: first supervised fine-tuning, then reward model training on ranked outputs, and finally policy optimization with PPO. As of 2026, RLHF remains the conceptual foundation of LLM alignment, but production systems increasingly replace … Read more