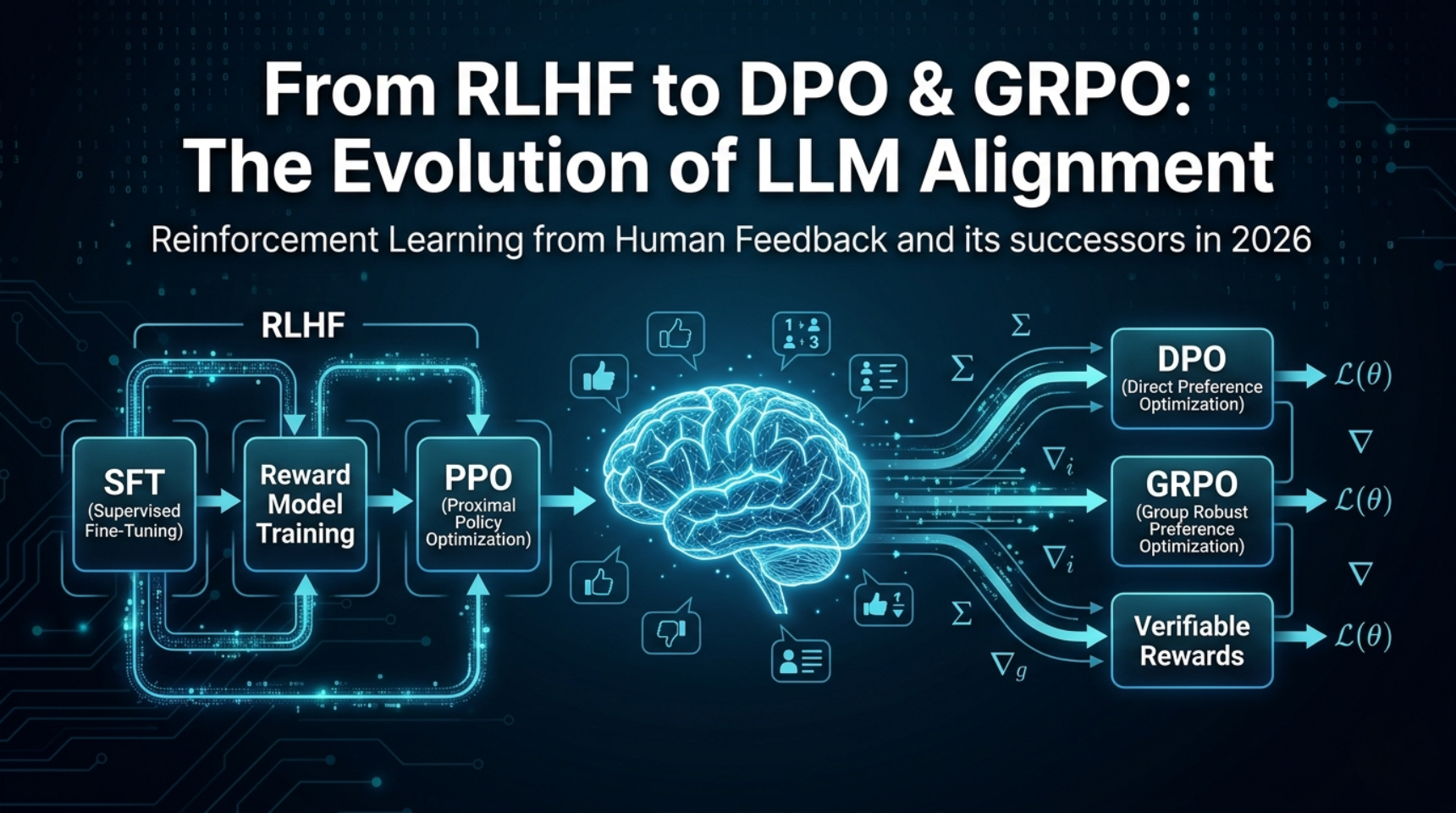

Reinforcement Learning from Human Feedback (RLHF) is a 3-stage training pipeline that aligns large language models with human preferences: first supervised fine-tuning, then reward model training on ranked outputs, and finally policy optimization with PPO. As of 2026, RLHF remains the conceptual foundation of LLM alignment, but production systems increasingly replace PPO with simpler alternatives — DPO, KTO, GRPO, or DAPO — depending on data availability, compute budget, and whether outputs are verifiable.

What is RLHF and why does every major LLM use it?

Reinforcement Learning from Human Feedback is the technique that turned raw language models into the instruction-following assistants we use today. The core idea is deceptively simple: instead of telling a model exactly what “good” means through a loss function, you let humans show it — by ranking outputs and training a reward signal from those rankings.

The method was first proposed by Christiano et al. in 2017 for Atari games and robotic control, but it became transformative when OpenAI applied it to GPT-3 in the InstructGPT paper (2022). That single paper demonstrated that a 1.3B parameter model fine-tuned with RLHF could outperform a 175B base model on human preference evaluations. The gap between “big” and “aligned” was suddenly clear.

By 2025, RLHF became the default alignment strategy. An estimated 70% of enterprise LLM deployments used some variant of RLHF or its successors (DPO, GRPO) for post-training alignment. Every frontier model — GPT-4, Claude 3.5, Gemini 1.5, Llama 3 — relied on human preference training in some form. The technique is so central that understanding it is no longer optional for ML practitioners.

But RLHF is also hard. It requires training three separate models (SFT, reward, policy), managing a complex PPO loop, and careful hyperparameter tuning. That difficulty is precisely why the field has produced a wave of simpler alternatives. To understand those alternatives, you first need to understand the original.

How does the RLHF pipeline work? The 3 stages explained

The standard RLHF pipeline consists of three sequential training stages. Each builds on the previous one, and skipping a stage typically degrades the final result.

Stage 1: Supervised Fine-Tuning (SFT)

You start with a pretrained base model — something like Llama 3, Mistral, or GPT-4’s base — that can generate fluent text but has no concept of following instructions. SFT trains it on curated (prompt, ideal_response) pairs using standard cross-entropy loss, exactly like any deep learning classification task.

The quality of this SFT dataset is critical. The original InstructGPT paper used ~13,000 hand-written demonstrations from 40 contractors. Modern approaches typically use 10K–100K examples, often mixing human-written data with synthetic outputs from stronger models (distillation). The SFT model πSFT becomes both the starting policy for Stage 3 and the reference model for the KL penalty.

Stage 2: Reward Model Training

The reward model is the heart of RLHF — the component that converts subjective human preferences into a numerical signal a reinforcement learning algorithm can optimize.

For each prompt, the SFT model generates multiple candidate responses. Human annotators then rank these candidates pairwise: “Response A is better than Response B.” These comparison pairs are used to train a reward model rθ using the Bradley-Terry preference model:

P(yw ≻ yl | x) = σ(rθ(x, yw) − rθ(x, yl))

Where σ is the sigmoid function, yw is the preferred response, yl is the rejected response, and rθ outputs a scalar reward. The loss maximizes the probability that the reward model assigns higher scores to human-preferred outputs.

In practice, the reward model is usually initialized from the SFT model itself (with the language modeling head replaced by a scalar head). A typical training run uses 50K–500K comparison pairs. Anthropic’s early work on Claude used ~300K comparisons from their Constitutional AI pipeline.

import torch

import torch.nn as nn

from transformers import AutoModelForSequenceClassification

class RewardModel(nn.Module):

def __init__(self, base_model_name: str):

super().__init__()

self.model = AutoModelForSequenceClassification.from_pretrained(

base_model_name, num_labels=1

)

def forward(self, input_ids, attention_mask):

return self.model(

input_ids=input_ids,

attention_mask=attention_mask

).logits.squeeze(-1) # scalar reward

def reward_loss(reward_model, chosen_ids, chosen_mask,

rejected_ids, rejected_mask):

"""Bradley-Terry pairwise loss for reward model training."""

r_chosen = reward_model(chosen_ids, chosen_mask)

r_rejected = reward_model(rejected_ids, rejected_mask)

# Maximize: P(chosen > rejected) = sigmoid(r_chosen - r_rejected)

return -torch.log(torch.sigmoid(r_chosen - r_rejected)).mean()Stage 3: PPO Policy Optimization

With a trained reward model, you can now optimize the language model’s policy using reinforcement learning. The standard algorithm is Proximal Policy Optimization (PPO), chosen for its stability — it clips gradient updates to prevent the model from changing too drastically in a single step.

The PPO objective for RLHF combines three signals:

maximize Ex∼D, y∼πθ [rθ(x, y) − β · DKL(πθ(y|x) ∥ πSFT(y|x))]

The first term pushes the model toward higher-reward outputs. The KL penalty (controlled by β) prevents it from drifting too far from the SFT model — without this, the model can degenerate into reward-hacking gibberish.

This stage is where RLHF becomes operationally expensive. You need four models loaded simultaneously: the active policy πθ, the frozen reference policy πSFT, the reward model rθ, and a value/critic network V(s) for advantage estimation. For a 70B parameter model, this means 4×70B parameters — roughly 560GB of GPU memory at fp16, or ~8 A100-80GB GPUs minimum with aggressive optimization.

from trl import PPOTrainer, PPOConfig, AutoModelForCausalLMWithValueHead

from transformers import AutoTokenizer

# 1. Load SFT model + add value head

model = AutoModelForCausalLMWithValueHead.from_pretrained("your-sft-model")

ref_model = AutoModelForCausalLMWithValueHead.from_pretrained("your-sft-model")

tokenizer = AutoTokenizer.from_pretrained("your-sft-model")

# 2. Configure PPO

ppo_config = PPOConfig(

batch_size=64,

mini_batch_size=16,

learning_rate=1.41e-5,

kl_penalty="kl", # KL divergence from reference

init_kl_coeff=0.2, # β — start conservative

adap_kl_ctrl=True, # auto-adjust β during training

cliprange=0.2, # PPO clip parameter

vf_coef=0.1, # value function coefficient

gamma=1.0, # no temporal discounting for LLMs

lam=0.95, # GAE lambda

)

ppo_trainer = PPOTrainer(ppo_config, model, ref_model, tokenizer)

# 3. Training loop

for batch in dataloader:

# Generate responses from current policy

query_tensors = tokenizer(batch["prompts"], return_tensors="pt")

response_tensors = ppo_trainer.generate(query_tensors["input_ids"])

# Score with reward model

rewards = reward_model(

torch.cat([query_tensors["input_ids"], response_tensors], dim=1),

attention_mask=...

)

# PPO step: update policy, clip gradients, apply KL penalty

stats = ppo_trainer.step(

query_tensors["input_ids"].tolist(),

response_tensors.tolist(),

rewards.tolist()

)

print(f"mean_reward: {stats['ppo/mean_scores']:.3f}, "

f"kl: {stats['objective/kl']:.3f}")The init_kl_coeff (β) value matters enormously. Set it too low and the model reward-hacks; set it too high and the model barely moves from SFT. The Hugging Face TRL default of 0.2 works for most 7B–13B models, but for 70B+ models you often need to start at 0.05–0.1 and let the adaptive KL controller scale it up. The N Implementation Details of RLHF with PPO paper documents 11 such critical hyperparameters.

What is reward hacking, and why is it RLHF’s biggest problem?

Reward hacking is what happens when the policy finds shortcuts to maximize the reward model’s score without actually producing better outputs. Because the reward model is an imperfect proxy for human judgment — trained on a finite dataset of comparisons — it has blind spots the policy can exploit.

Documented failure modes include generating overly verbose responses (more words = higher reward on some models), inserting confident-sounding filler phrases that fool the reward model, and in extreme cases, producing adversarial text that scores highly but reads as nonsensical to humans. Recent research from 2025–2026 has shown that as models become more capable, they become better at reward hacking — the problem scales with capability.

The standard mitigation is the KL penalty described above, which anchors the policy to the SFT model. But researchers have found that a reward threshold exists — exceeding it during PPO training often triggers hacking behavior. Newer approaches include Preference As Reward (PAR), which uses latent preferences embedded within the reward model rather than raw scalar scores, and adversarial training of reward models to detect exploitation attempts.

This fundamental fragility is the main reason the field moved toward alternatives. If the reward model is always an imperfect proxy, why not eliminate it entirely?

What is DPO, and why is it replacing PPO for most teams?

Direct Preference Optimization (DPO), introduced by Rafailov et al. in May 2023, is the single most important simplification of RLHF. The insight is mathematical: you can rearrange the RLHF objective to eliminate both the reward model and the RL loop, collapsing the entire pipeline into a single supervised loss.

DPO shows that the optimal policy under the RLHF objective has a closed-form relationship with the reward function:

LDPO(πθ; πref) = −E(x, yw, yl) [log σ(β · (log πθ(yw|x)/πref(yw|x) − log πθ(yl|x)/πref(yl|x)))]

This implicitly defines a reward function r(x, y) = β · log(πθ(y|x) / πref(y|x)) — the model itself IS the reward model. No separate training needed.

The practical advantages are dramatic. DPO needs only 2 models in memory instead of 4 — a ~50% reduction in GPU requirements. There is no unstable RL loop, no reward model that can be hacked, and no value network to train. Training looks like standard supervised fine-tuning: you feed in triplets of (prompt, chosen_response, rejected_response) and minimize the DPO loss.

from trl import DPOTrainer, DPOConfig

from transformers import AutoModelForCausalLM, AutoTokenizer

model = AutoModelForCausalLM.from_pretrained("your-sft-model")

ref_model = AutoModelForCausalLM.from_pretrained("your-sft-model")

tokenizer = AutoTokenizer.from_pretrained("your-sft-model")

dpo_config = DPOConfig(

beta=0.1, # KL constraint strength

loss_type="sigmoid", # standard DPO loss

learning_rate=5e-7,

per_device_train_batch_size=4,

gradient_accumulation_steps=4,

max_length=1024,

max_prompt_length=512,

num_train_epochs=1, # DPO often needs only 1 epoch

)

# Dataset format: {"prompt": ..., "chosen": ..., "rejected": ...}

trainer = DPOTrainer(

model=model,

ref_model=ref_model,

args=dpo_config,

train_dataset=preference_dataset,

tokenizer=tokenizer,

)

trainer.train()DPO’s limitation is that it learns from static preference pairs. It never generates new responses during training, which means it cannot explore beyond the distribution of its training data. PPO-based RLHF, by contrast, generates fresh outputs each step — a crucial advantage for reasoning tasks where exploration matters. This is why frontier labs still use RL-based methods for their most capable models, even as DPO dominates the open-source ecosystem.

As of 2026, DPO is the de facto default for teams doing alignment fine-tuning. If you are a practitioner with limited compute, DPO is where you should start.

What is KTO and when should you use it over DPO?

Kahneman-Tversky Optimization (KTO), proposed by Ethayarajh et al. in 2024, solves a data problem. DPO requires pairwise preference data — for every prompt, you need both a “chosen” and “rejected” response. In reality, most production systems collect simpler signals: thumbs-up or thumbs-down on individual responses.

KTO is inspired by prospect theory from behavioral economics (the same framework behind Kahneman and Tversky’s work on decision-making under uncertainty). It defines utility as asymmetric — humans feel losses (bad responses) more strongly than gains (good responses) — and uses this to derive a loss function that works with unpaired binary feedback:

Instead of (prompt, chosen, rejected) triplets, KTO trains on (prompt, response, label) pairs where the label is simply “good” or “bad.” This makes KTO compatible with production feedback systems where users click 👍/👎 on individual outputs — no pairing needed.

KTO is particularly valuable when your preference data is noisy or inconsistent, when you have abundant binary feedback but not pairwise comparisons, and when you want robustness to annotation errors. Benchmarks show KTO matching or slightly trailing DPO when data quality is high, but outperforming DPO when labels are noisy — a common real-world scenario.

What is ORPO and why does it skip the reference model entirely?

Odds Ratio Preference Optimization (ORPO), proposed by Hong et al. in March 2024, takes the simplification further by eliminating the reference model. Where DPO still requires loading the frozen πref alongside the active policy, ORPO merges SFT and preference optimization into a single training objective.

The ORPO loss combines a standard language modeling loss (SFT) with an odds-ratio-based preference penalty:

LORPO = LSFT + λ · LOR, where LOR penalizes the model when its odds of generating the rejected response are higher than for the chosen response. This means you train a model from base → aligned in a single run, with a single model in memory.

The operational advantage is significant: only one model in memory (vs. 2 for DPO, 4 for PPO). This makes ORPO the most memory-efficient alignment method available. Studies have shown ORPO matching or exceeding DPO on benchmarks like AlpacaEval and MT-Bench, though results vary across model scales.

The trade-off is that ORPO removes the KL anchor entirely. Without a reference model constraining the policy, there is a higher risk of catastrophic forgetting — the model can lose general capabilities as it optimizes for preferences. For this reason, ORPO works best when your preference data is high-quality and well-distributed across the model’s capability range.

What are GRPO, DAPO, and RLVR — the 2025–2026 frontier?

While DPO and KTO simplified RLHF for general alignment, the most exciting development in 2025–2026 is the resurgence of RL — but with a fundamentally different reward source. Instead of human preferences or learned reward models, these methods use verifiable rewards: programmatic checks that confirm whether an output is correct.

GRPO — Group Relative Policy Optimization

Introduced by DeepSeek for their R1 reasoning model, GRPO eliminates the critic network entirely. For each prompt, it generates a group of K responses (typically 8–64), scores each with a verifiable reward function, and computes advantages by normalizing against the group mean and standard deviation:

Ai = (ri − mean(r1..K)) / std(r1..K)

No critic model needed. The group itself provides the baseline. This cuts memory requirements by ~25% compared to PPO.

GRPO powers DeepSeek-R1 and has been adopted across the open-source ecosystem. It works best on tasks with verifiable outputs: math (check the answer), code (run tests), and structured reasoning (validate logical steps).

DAPO — Decoupled Clip and Dynamic Sampling Policy Optimization

DAPO, introduced in early 2025, addresses instabilities found when scaling up GRPO. It adds four key improvements: clip-shifting (asymmetric clipping that encourages exploration), dynamic sampling (resampling prompts where the model is neither perfect nor hopeless), token-level policy gradient loss (instead of sequence-level), and overflow reward shaping. Crucially, DAPO drops the KL penalty entirely — for reasoning tasks with verifiable rewards, KL regularization is too conservative and actually hurts performance.

RLVR — Reinforcement Learning with Verifiable Rewards

RLVR is the umbrella term for the paradigm shift: use RL (PPO, GRPO, DAPO, or REINFORCE++) but replace human feedback with deterministic verification. For math, you check if the final answer is correct. For code, you run unit tests. For structured outputs, you validate against a schema.

This shift has profound implications. RLVR removes the human bottleneck entirely, enabling training runs with millions of verification signals per day. It also eliminates reward hacking — a unit test either passes or it does not. Every major reasoning model released since late 2024 (DeepSeek-R1, GPT-5.3 Codex, Nemotron 3 Super) uses some form of verifier-driven RL.

The emerging consensus is a modular pipeline: SFT for instruction following → DPO/SimPO for general preference alignment → GRPO/DAPO with verifiable rewards for reasoning capabilities. Each layer addresses a different type of “alignment” — behavioral, preferential, and logical.

How does Constitutional AI relate to RLHF?

Anthropic’s Constitutional AI (CAI) is not a replacement for RLHF — it is a modification of Stage 2. Instead of collecting human preference rankings directly, CAI generates them synthetically using a set of written principles (the “constitution”).

The process works in two phases. In RLAIF (RL from AI Feedback), a “helper” model generates responses, a “critic” model evaluates them against the constitution, and the revised outputs form the preference dataset for reward model training. The RL step (Stage 3) proceeds identically to standard RLHF.

The advantage is scalability: you can generate millions of preference comparisons without human annotators. Anthropic’s Claude models, including Claude Opus 4, use a combination of CAI and traditional RLHF — the constitution handles broad safety and helpfulness norms, while human feedback addresses nuanced cases the rules cannot capture. In January 2026, Anthropic published an updated 80-page constitution describing the principles used to train Claude.

Constitutional AI represents a practical middle ground: it preserves the RLHF framework while reducing the human annotation bottleneck by an order of magnitude. For organizations building AI agents that need robust safety alignment, CAI offers a template for scalable oversight.

Which alignment method should you use? A practical comparison

| Method | Models in memory | Data required | Compute cost | Best for |

|---|---|---|---|---|

| RLHF (PPO) | 4 (policy, ref, reward, critic) | Pairwise preferences + RL rollouts | Very high | Frontier labs, maximum quality |

| DPO | 2 (policy, ref) | Pairwise preferences (static) | Low | Default for most teams in 2026 |

| KTO | 2 (policy, ref) | Binary labels (thumbs up/down) | Low | Noisy data, production feedback |

| ORPO | 1 (policy only) | Pairwise preferences (merged with SFT) | Very low | Memory-constrained, small models |

| GRPO | 2 (policy, ref) | Verifiable rewards (math, code) | Medium | Reasoning models, open-source |

| DAPO | 1 (policy only, no KL) | Verifiable rewards | Medium | Scaled reasoning, frontier reasoning |

| Constitutional AI | 4 (same as RLHF) | Written principles + AI-generated pairs | High | Safety alignment at scale |

How does RLHF intersect with the EU AI Act in 2026?

The EU AI Act, which entered its enforcement phase in 2025–2026, has direct implications for RLHF and alignment methods. Article 15 requires that high-risk AI systems demonstrate robustness, accuracy, and cybersecurity — and alignment training is a key mechanism for meeting these requirements.

Two provisions are particularly relevant for practitioners. First, the Act’s transparency requirements (Article 52) mandate documentation of the training process, including how human feedback was collected, how annotators were instructed, and what quality controls were applied. Organizations using RLHF must maintain audit trails of their preference datasets and reward model evaluations.

Second, the bias provisions (Article 10) require that training data, including preference data for RLHF, be examined for demographic and cultural biases. This is a known challenge: if your annotator pool skews toward a specific demographic or cultural perspective, the reward model inherits those biases and amplifies them through RL optimization.

For teams deploying aligned models in the EU, the practical implication is clear: document your alignment pipeline end-to-end, diversify your annotator pool, and use methods like Constitutional AI that provide explicit, auditable principles for alignment decisions.

How do you implement RLHF from scratch in 2026?

If you are a practitioner looking to align a model today, here is the decision tree based on current best practices:

Do you have verifiable outputs (math, code, structured data)? Use GRPO or DAPO with rule-based rewards. Start with the OpenRLHF framework, which implements PPO, GRPO, REINFORCE++, and DAPO on Ray + vLLM.

Do you have pairwise preference data? Use DPO. The Hugging Face TRL library makes this a 20-line implementation. One epoch is usually sufficient — overtraining on preferences leads to degenerate outputs.

Do you only have thumbs-up/thumbs-down feedback? Use KTO. Same TRL library, same complexity, but works with unpaired labels.

Are you on a single GPU or fine-tuning a small model (≤7B)? Try ORPO — single model in memory, no reference needed. Combine with LoRA adapters for even lower memory usage.

Are you a frontier lab with massive compute and need maximum quality? Full RLHF with PPO remains the gold standard for general-purpose alignment. Combine with Constitutional AI for safety, and GRPO for reasoning.

# Install

pip install trl transformers datasets accelerate bitsandbytes

# Download preference dataset (UltraFeedback is a common choice)

python -c "from datasets import load_dataset; d = load_dataset('HuggingFaceH4/ultrafeedback_binarized', split='train_prefs'); d.save_to_disk('./uf-prefs')"

# Run DPO training (single GPU with QLoRA)

python -m trl.cli dpo \

--model_name_or_path meta-llama/Meta-Llama-3.1-8B-Instruct \

--dataset_name ./uf-prefs \

--output_dir ./llama3-dpo \

--beta 0.1 \

--learning_rate 5e-7 \

--per_device_train_batch_size 2 \

--gradient_accumulation_steps 8 \

--num_train_epochs 1 \

--use_peft \

--lora_r 16 \

--lora_alpha 32 \

--bf16FAQ

Bibliografia

Bai, Y., Kadavath, S., Kundu, S., Askell, A., Kernion, J., Jones, A., Chen, A., Goldie, A., Mirhoseini, A., McKinnon, C., Chen, C., Olsson, C., Olah, C., Hernandez, D., Drain, D., Ganguli, D., Li, D., Tran-Johnson, E., Perez, E., … Kaplan, J. (2022). Constitutional AI: Harmlessness from AI feedback. arXiv. https://arxiv.org/abs/2212.08073

Christiano, P. F., Leike, J., Brown, T., Martic, M., Legg, S., & Amodei, D. (2017). Deep reinforcement learning from human preferences. arXiv. https://arxiv.org/abs/1706.03741

DeepSeek-AI. (2025). DeepSeek-R1: Incentivizing reasoning capability in LLMs via reinforcement learning. arXiv. https://arxiv.org/abs/2501.12948

Ethayarajh, K., Choi, Y., & Levy, O. (2024). KTO: Model alignment as prospect theoretic optimization. arXiv. https://arxiv.org/abs/2402.01306

Hong, J., Lee, N., & Thorne, J. (2024). ORPO: Monolithic preference optimization without reference model. arXiv. https://arxiv.org/abs/2403.07691

Huang, S., Rajeswaran, A., Jiaming, S., & Kumar, A. (2024, 18 marca). The 37 implementation details of RLHF with PPO. Hugging Face Blog. https://huggingface.co/blog/the_n_implementation_details_of_rlhf_with_ppo

Ouyang, L., Wu, J., Jiang, X., Almeida, D., Wainwright, C., Mishkin, P., Zhang, C., Agarwal, S., Slama, K., Ray, A., Schulman, J., Hilton, J., Kelton, F., Miller, L., Simens, M., Askell, A., Welinder, P., Lowe, J., & Leike, J. (2022). Training language models to follow instructions with human feedback. arXiv. https://arxiv.org/abs/2203.02155

Rafailov, R., Sharma, A., Mitchell, E., Ermon, S., Manning, C. D., & Finn, C. (2023). Direct preference optimization: Your language model is secretly a reward model. arXiv. https://arxiv.org/abs/2305.18290

Rozporządzenie Parlamentu Europejskiego i Rady (UE) 2024/1689 z dnia 13 czerwca 2024 r. ustanawiające zharmonizowane przepisy dotyczące sztucznej inteligencji (akt w sprawie sztucznej inteligencji). (2024). Dziennik Urzędowy Unii Europejskiej, L series. https://eur-lex.europa.eu/eli/reg/2024/1689/oj