Artificial intelligence (AI) is the branch of computer science focused on building systems that perform tasks associated with human intelligence — learning, reasoning, planning, perception, and communication. Modern AI spans everything from rule-based expert systems and classical machine learning to deep neural networks and large language models, and it is governed by an evolving regulatory landscape including the EU AI Act and the NIST AI Risk Management Framework.

Defining Artificial Intelligence

Artificial intelligence (AI) is a field of computer science dedicated to creating systems capable of performing tasks we typically associate with human cognition: learning from experience, reasoning through problems, planning ahead, perceiving the environment, and communicating in natural language.

Institutional definitions emphasize the functional nature of AI — the ability of technical systems to receive data from their environment, process it, and act in pursuit of a specific goal. The European Parliament frames AI through the lens of “human-like” capabilities (reasoning, learning, planning, creativity) and a perception–decision–action loop (European Parliament, 2020).

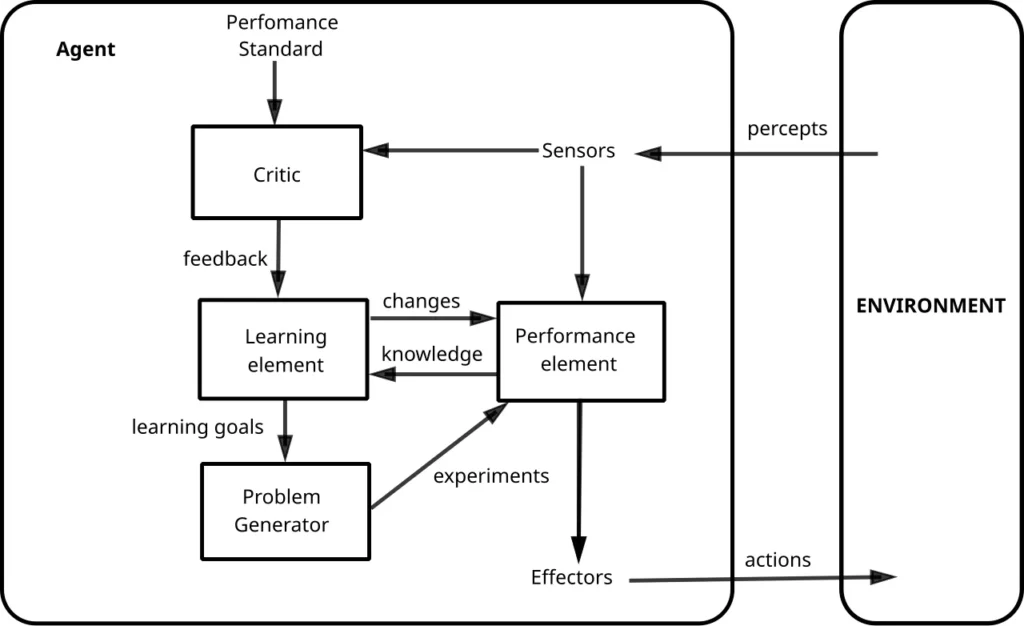

In academic terms, AI is often understood as the study of intelligent agents — programs or robots that receive percepts, operate within an environment, and maximize a performance measure. This framing accommodates everything from handcrafted rule systems and classical machine learning to deep reinforcement learning. Russell and Norvig (2021) made the “rational agent” the unifying theme of their landmark textbook, Artificial Intelligence: A Modern Approach.

Core Distinctions: AI vs. ML vs. DL

AI is the umbrella term for methods and systems that exhibit intelligent behavior.

Machine Learning (ML) is a subset of AI — methods that learn patterns from data rather than following hard-coded rules.

Deep Learning (DL) is a subset of ML built on deep neural networks with multiple layers of abstraction.

In philosophical literature, notably the Stanford Encyclopedia of Philosophy, the definition of AI encompasses both the history and philosophy of the field, as well as ongoing debates about the boundaries of the concept (Bringsjord & Govindarajulu, 2024).

Two Useful Lenses on AI

- AI as a research and technology field (computer science + cognitive science + logic + neuroscience): we build systems that are “intelligent” in a functional sense — they solve problems, recognize patterns, and generate useful outputs.

- AI as intelligent agents: an agent receives stimuli through sensors, updates its internal state (memory), and selects an action that maximizes a utility function. This formalism unifies practice from expert rules through ML to planning and reinforcement learning (Russell & Norvig, 2021).

How Does AI Work? Conceptual Framework

The Agent Loop

At its core, AI can be described as an information-processing loop. In the agent-based view: perception → state update → action selection (utility maximization) → action in the environment. Russell and Norvig (2021, ch. 2) illustrate this with reflex agents, goal-based agents, utility-based agents, and learning agents.

Illustration: “Learning Agent: Agent Loop Variant.” Source: IntelligentAgent-Learning.svg, Wikimedia Commons, author Pduive23, based on Russell & Norvig (CC0 / Public Domain).
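The perception → state update → action selection → action loop can be sketched in a few lines of Python. This is a toy illustration under stated assumptions: the `ThermostatAgent` class, its three actions, and the one-degree effect of each action are invented here for the example and are not taken from Russell and Norvig.

```python
from dataclasses import dataclass, field

# Assumed effect of each action on the temperature (degrees per step).
EFFECTS = {"heat": 1.0, "cool": -1.0, "idle": 0.0}


@dataclass
class ThermostatAgent:
    """Toy utility-based agent; all numbers are illustrative."""
    target: float = 21.0
    history: list = field(default_factory=list)  # internal state (memory)

    def perceive(self, temperature: float) -> None:
        # Perception and state update: remember the latest reading.
        self.history.append(temperature)

    def utility(self, action: str) -> float:
        # Predict the temperature after the action; closer to target is better.
        predicted = self.history[-1] + EFFECTS[action]
        return -abs(self.target - predicted)

    def act(self) -> str:
        # Action selection: choose the action with the highest utility.
        return max(EFFECTS, key=self.utility)


def run(agent: ThermostatAgent, temperature: float, steps: int) -> list:
    """Run the agent loop and return the (action, temperature) trace."""
    trace = []
    for _ in range(steps):
        agent.perceive(temperature)
        action = agent.act()
        temperature += EFFECTS[action]  # the action changes the environment
        trace.append((action, temperature))
    return trace


trace = run(ThermostatAgent(), temperature=18.0, steps=5)
print(trace)
```

Starting at 18 degrees, the agent heats for three steps and then idles once the target is reached. A learning agent would additionally adjust `EFFECTS` or the utility function from experience.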

The Mechanics in 7 Steps

- Problem & success metric. Example: spam detection; metrics: F1, recall ≥ 0.90 (Pedregosa et al., 2011).

- Data. Labeled examples; train / validation / test split.

- Model. From logistic regression and decision trees to deep architectures (CNNs, Transformers).

- Training. Loss minimization (e.g., log-loss), optimization (SGD, Adam).

- Evaluation. Accuracy, precision / recall, F1, ROC AUC, cross-validation (Pedregosa et al., 2011).

- Deployment. API or edge serving, model registry, drift monitoring. The NIST AI RMF recommends risk management across the entire system lifecycle (National Institute of Standards and Technology [NIST], 2023).

- Risk management. Privacy, security, compliance — in the EU the AI Act and DPIA requirements set the standard (European Parliament, 2024; UODO, n.d.).
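The training step (loss minimization with a gradient-based optimizer) can be made concrete with a deliberately small sketch: a one-feature logistic regression fitted by plain batch gradient descent on log-loss. The data, learning rate, and epoch count are assumptions chosen for illustration; production code would normally rely on a library optimizer such as SGD or Adam.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def log_loss(w: float, b: float, xs: list, ys: list) -> float:
    """Mean binary cross-entropy of the model p = sigmoid(w * x + b)."""
    eps = 1e-12  # guards against log(0)
    total = 0.0
    for x, y in zip(xs, ys):
        p = sigmoid(w * x + b)
        total -= y * math.log(p + eps) + (1 - y) * math.log(1 - p + eps)
    return total / len(xs)


def train(xs: list, ys: list, lr: float = 0.1, epochs: int = 500):
    """Batch gradient descent on log-loss for a single feature."""
    w, b = 0.0, 0.0
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in zip(xs, ys):
            error = sigmoid(w * x + b) - y  # derivative of the loss w.r.t. the logit
            grad_w += error * x
            grad_b += error
        w -= lr * grad_w / len(xs)
        b -= lr * grad_b / len(xs)
    return w, b


# Made-up feature: number of "spammy" words per message; label 1 = spam.
xs = [0, 1, 0, 2, 5, 6, 4, 7]
ys = [0, 0, 0, 0, 1, 1, 1, 1]

loss_before = log_loss(0.0, 0.0, xs, ys)
w, b = train(xs, ys)
loss_after = log_loss(w, b, xs, ys)
print(f"log-loss before: {loss_before:.3f}, after: {loss_after:.3f}")
```

The same pattern (compute the loss, follow its gradient, repeat) underlies training of much larger models; only the model and the optimizer change.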

Symbolic vs. Learning-Based: From Logic to Neurosymbolic AI

Symbolic approaches (logic, rule systems, formal reasoning) excel at representing explicit knowledge and enabling controlled inference, while learning-based methods (ML/DL) are powerful at fitting patterns from data. Contemporary research increasingly points toward neurosymbolic integration: combining the interpretability and expert-knowledge advantages of symbolic methods with the predictive quality of neural networks (Garcez & Lamb, 2023).
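One simple way to picture this combination is a rule layer placed in front of a learned scorer: explicit rules decide when they apply, and the model handles everything else. The sketch below is an assumption-laden toy, not a method described by Garcez and Lamb; `learned_score` is a hypothetical stand-in for a trained model, and the rules and domain names are invented.

```python
import re


def learned_score(text: str) -> float:
    """Hypothetical stand-in for a trained model's spam probability."""
    spammy = {"free", "winner", "prize", "urgent"}
    words = re.findall(r"[a-z]+", text.lower())
    hits = sum(word in spammy for word in words)
    return min(1.0, hits / 3)


# Symbolic layer: explicit, auditable rules as (name, predicate, forced label).
RULES = [
    ("trusted_sender", lambda msg: msg["sender"].endswith("@school.example"), "ham"),
    ("blocked_link", lambda msg: "bit.ly/" in msg["text"], "spam"),
]


def classify(msg: dict, threshold: float = 0.5):
    """Return (label, explanation); rules take precedence over the model."""
    for name, predicate, label in RULES:
        if predicate(msg):
            return label, f"rule:{name}"
    score = learned_score(msg["text"])
    label = "spam" if score >= threshold else "ham"
    return label, f"model:{score:.2f}"


print(classify({"sender": "office@school.example", "text": "Free prize draw"}))
print(classify({"sender": "x@mail.example", "text": "You are a winner, claim your free prize"}))
```

The explanation string shows which component made the decision, which is the interpretability benefit the symbolic side is meant to bring.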

Classification of AI Applications

Cross-Sector Map

- Public sector & education: 24/7 QA/FAQ assistants, improved information accessibility, reduced response times. EPRS reports discuss both opportunities and risks (European Parliament, 2020).

- Healthcare: medical image classification (X-ray, MRI), triage systems; in the EU, AI used for diagnostics generally falls into the high-risk category and faces stricter requirements (European Parliament, 2024).

- Manufacturing & IoT: predictive maintenance, energy optimization; edge AI reduces latency at the cost of more complex maintenance.

- Digital business: recommendation engines, credit scoring, virtual assistants; engineering best practices emphasize the distinctions between AI/ML/DL and rigorous quality metrics (Pedregosa et al., 2011).

Mini-Case A: School FAQ Bot

- Data: ~4,000 Q&A pairs from school regulations and announcements.

- Model: RAG (Retrieval-Augmented Generation) + rule layer (neurosymbolic variant).

- Pilot results: ~68% of inquiries resolved without human contact; CSAT 4.3/5; response time reduced by 80%. (Illustrative educational example.)
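The retrieval half of such a bot can be sketched without any external library. The example below ranks stored questions by bag-of-words cosine similarity and falls back to a human when nothing matches well enough. The three Q&A pairs, the `min_score` cut-off, and the function names are hypothetical; a real RAG system would typically use learned embeddings and pass the retrieved text to a language model for generation, which is omitted here.

```python
import math
import re
from collections import Counter

# Hypothetical Q&A pairs standing in for the school's knowledge base.
FAQ = [
    ("When does the school year start?", "Classes begin on the first Monday of September."),
    ("How do I report a student absence?", "Send a note through the parent portal before 8:00."),
    ("What are the library opening hours?", "The library is open 8:00-16:00 on school days."),
]


def tokenize(text: str) -> list:
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, min_score: float = 0.2):
    """Return the best-matching answer, or None to hand over to a human."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(question))), answer) for question, answer in FAQ]
    best_score, best_answer = max(scored)
    if best_score < min_score:
        return None  # rule layer: no confident match, escalate
    return best_answer


print(retrieve("library hours"))
print(retrieve("cafeteria menu"))
```

The `None` branch is where a rule layer earns its place: an unanswered query is escalated rather than answered with a guess.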

Mini-Case B: E-Commerce Recommendations

- Model: Learning-to-Rank (handcrafted features + product embeddings).

- A/B test (N ≈ 50,000 sessions): CTR +12%, average cart value +6%. (Illustrative educational example.)
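Before acting on a lift like this, it is worth checking that it is unlikely to be noise. The sketch below applies a two-proportion z-test using only the standard library. The click counts are hypothetical, chosen so that an even 25,000/25,000 split reproduces the +12% relative CTR; the mini-case itself does not report raw counts.

```python
import math


def two_proportion_z_test(clicks_a: int, n_a: int, clicks_b: int, n_b: int):
    """Return (relative lift, z statistic, two-sided p-value) for B versus A."""
    p_a, p_b = clicks_a / n_a, clicks_b / n_b
    pooled = (clicks_a + clicks_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail
    return p_b / p_a - 1, z, p_value


# Hypothetical counts: control CTR 10.0%, variant CTR 11.2%.
lift, z, p_value = two_proportion_z_test(2500, 25_000, 2800, 25_000)
print(f"lift={lift:+.1%}  z={z:.2f}  p={p_value:.2g}")
```

The test assumes independent sessions, which is an approximation when one user contributes many sessions; in that case aggregating per user first is the safer choice.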

Mini-Case C: Factory Visual Quality Control

- Model: CNN (segmentation), alarm threshold: recall ≥ 0.95.

- Result: recall 0.96, precision 0.88; false alarms mitigated with business rules. (Illustrative educational example.)
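A recall target like this is usually met by tuning the decision threshold on validation scores. The sketch below picks the highest threshold that still reaches a required recall and reports the resulting precision. The scores and labels are made up, and the 0.80 target is lowered from the case's 0.95 only because the toy set has five positives.

```python
def precision_recall(scores: list, labels: list, threshold: float):
    """Precision and recall when predicting positive for score >= threshold."""
    tp = sum(s >= threshold and y == 1 for s, y in zip(scores, labels))
    fp = sum(s >= threshold and y == 0 for s, y in zip(scores, labels))
    fn = sum(s < threshold and y == 1 for s, y in zip(scores, labels))
    precision = tp / (tp + fp) if tp + fp else 1.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def pick_threshold(scores: list, labels: list, min_recall: float):
    """Highest threshold whose recall meets the target.

    Recall can only grow as the threshold drops, so scanning candidates
    from high to low and stopping at the first hit is sufficient.
    """
    for threshold in sorted(set(scores), reverse=True):
        precision, recall = precision_recall(scores, labels, threshold)
        if recall >= min_recall:
            return threshold, precision, recall
    raise ValueError("recall target cannot be met")


# Made-up validation scores (defect probability) and labels (1 = defect).
scores = [0.95, 0.90, 0.80, 0.70, 0.60, 0.40, 0.30, 0.20, 0.10, 0.05]
labels = [1, 1, 1, 0, 1, 0, 1, 0, 0, 0]

threshold, precision, recall = pick_threshold(scores, labels, min_recall=0.80)
print(threshold, precision, recall)
```

For the figures quoted in the mini-case, the corresponding F1 is 2 · 0.88 · 0.96 / (0.88 + 0.96) ≈ 0.918.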

Mini-Case D: Health — Symptom Description Classifier

- Model: Transformer-based text classifier + safety filter.

- Result: AUC 0.89; limitations: no clinical decisions without a physician, strict legal and ethical requirements apply (Müller, 2024; European Parliament, 2024).

The AI System Lifecycle

A simplified production pipeline:

Data sources (logs, images, text) → ETL / feature store → ML/DL training → validation (metrics) → model registry → deployment (API/edge) → monitoring (drift, security) → feedback of new data & retraining.

Both survey literature and EPRS reports emphasize the continuous, feedback-driven nature of this lifecycle (European Parliament, 2020; NIST, 2023).
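The monitoring stage can be illustrated with a basic drift check. The sketch below computes the Population Stability Index (PSI) between a reference sample of one feature (for example, from training time) and a current production sample. The bin count, the floor for empty bins, and the 0.25 alert level are common conventions assumed here, not requirements from NIST or EPRS.

```python
import math


def psi(reference: list, current: list, bins: int = 10) -> float:
    """Population Stability Index of `current` against `reference`."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant data

    def histogram(values: list) -> list:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clip out-of-range values
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    ref, cur = histogram(reference), histogram(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


reference = [i / 100 for i in range(100)]        # uniform on [0, 1)
shifted = [0.5 + i / 200 for i in range(100)]    # mass moved to [0.5, 1)

print(f"no drift: {psi(reference, reference):.3f}")
print(f"drift:    {psi(reference, shifted):.3f}")

ALERT_LEVEL = 0.25  # rule-of-thumb threshold, assumed for this example
needs_retraining = psi(reference, shifted) > ALERT_LEVEL
```

A check like this would run on a schedule per feature, with an alert feeding the retraining step at the end of the pipeline.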

Metrics, Evaluation, and Common Pitfalls

Key Classification Metrics

- Accuracy

- Precision / Recall / F1-score

- ROC AUC and PR AUC

- scikit-learn’s classification_report for quick summaries (Pedregosa et al., 2011)

Caution with imbalanced classes: accuracy alone can be misleading — prefer F1, PR AUC, and decision-threshold analysis.
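A small worked example shows why. With 5 positives in 100 samples, a model that never predicts the positive class still reaches 95% accuracy, while a model that actually finds most positives scores lower on accuracy but far higher on F1. The label vectors below are made up for illustration.

```python
def classification_scores(y_true: list, y_pred: list, positive: int = 1):
    """Return (accuracy, precision, recall, f1) for a binary problem."""
    tp = sum(t == positive and p == positive for t, p in zip(y_true, y_pred))
    fp = sum(t != positive and p == positive for t, p in zip(y_true, y_pred))
    fn = sum(t == positive and p != positive for t, p in zip(y_true, y_pred))
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    accuracy = correct / len(y_true)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return accuracy, precision, recall, f1


y_true = [1] * 5 + [0] * 95

# Model A: always predicts the majority class.
always_negative = [0] * 100
# Model B: finds 4 of 5 positives at the cost of 6 false alarms.
detector = [1, 1, 1, 1, 0] + [1] * 6 + [0] * 89

baseline = classification_scores(y_true, always_negative)
model = classification_scores(y_true, detector)
print("always negative:", baseline)
print("detector:       ", model)
```

Model A wins on accuracy (0.95 versus 0.93) yet has zero recall; Model B reaches recall 0.80 and F1 of about 0.53.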

Typical Mistakes

- Confusing AI with ML/DL → projects launched without clear objectives or success metrics.

- Overfitting → stellar results on paper, poor generalization in production.

- Data leakage → features inadvertently “peek” at the target label.

- Hallucinations (LLMs) → fabricated facts; mitigated through RAG and citation mechanisms.

- Security threats: prompt injection, data poisoning, model stealing (NIST, 2023).
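Two of the mistakes above, overfitting and data leakage, are commonly guarded against by putting all preprocessing inside a pipeline and scoring it with cross-validation, so that the vectorizer is fitted on the training folds only. The sketch below uses scikit-learn for this; the twelve messages are invented, and the resulting scores on such a tiny corpus carry no meaning beyond showing the mechanics.

```python
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline

# Invented toy corpus: 6 spam and 6 ham messages.
texts = [
    "win a free prize now", "free cash winner claim now",
    "urgent prize waiting claim free", "cheap loans free offer",
    "winner winner free tickets", "claim your free cash prize",
    "see you at lunch tomorrow", "meeting moved to friday",
    "can you call me later", "homework is due on monday",
    "thanks for the notes", "dinner at home tonight",
]
labels = ["spam"] * 6 + ["ham"] * 6

# Because TF-IDF lives inside the pipeline, each fold refits it on that
# fold's training part; vocabulary and IDF weights never see the test part.
pipe = make_pipeline(TfidfVectorizer(), LogisticRegression(max_iter=200))

cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=0)
scores = cross_val_score(pipe, texts, labels, cv=cv, scoring="f1_macro")
print(scores, scores.mean())
```

Fitting the vectorizer on the full dataset before splitting would be the leaky variant: the held-out fold would already have shaped the features.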

Regulation and Ethics

EU Landscape

The AI Act is the world’s first comprehensive regulation of artificial intelligence, built on a risk-based approach (e.g., high-risk systems face stricter requirements). Alongside its supporting ecosystem of guidelines, it strengthens both safety standards and citizens’ rights (European Parliament, 2024).

A Data Protection Impact Assessment (DPIA) is required whenever processing is likely to pose a high risk to individuals’ rights and freedoms — particularly when new technologies or automated profiling are involved. Practical checklists are available from the Polish supervisory authority (UODO) and GDPR.eu (UODO, n.d.; GDPR.eu, n.d.).

Risk Management Frameworks

The NIST AI RMF (USA) is a voluntary, widely adopted framework organized around four functions (GOVERN, MAP, MEASURE, MANAGE) that apply throughout the system lifecycle. It helps organizations embed trustworthiness requirements (safety, reliability, privacy, fairness) into AI design and operations (NIST, 2023).

Ethics and Philosophy of AI

The Stanford Encyclopedia of Philosophy surveys ethical issues including manipulation, dignity, autonomy, accountability for AI-driven decisions, and the design of artifacts that simulate intelligence. This philosophical backdrop helps explain the legal and societal expectations placed on AI systems (Müller, 2024).

Getting Started: Checklist & Hands-On Project

Starter Checklist (for Students, Juniors, and Self-Learners)

- Define the problem and metrics (e.g., F1 ≥ 0.90; spam recall ≥ 0.92).

- Collect data and prepare a data card (provenance, rights, potential bias).

- Split the dataset into train / validation / test (e.g., 70/15/15, stratified).

- Start with a baseline (logistic regression, decision tree) — only then consider deep learning.

- Validate and log experiments (e.g., MLflow, Weights & Biases).

- Mine hard negatives (examples the model gets wrong with high confidence) and add them to the training set to improve precision and recall.

- Deploy via a simple endpoint (FastAPI / Flask) with configurable thresholds.

- Monitor and manage risks: data drift, audit logs, PII policy compliant with GDPR / DPIA (NIST, 2023; UODO, n.d.).
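The 70/15/15 stratified split from the checklist can be produced with two calls to scikit-learn's `train_test_split`: first carve off 30%, then halve that remainder. The synthetic messages and the 20% spam rate below are assumptions made only so the example runs on its own.

```python
from collections import Counter

from sklearn.model_selection import train_test_split

# Synthetic stand-in data: 200 messages, every fifth one labeled spam.
texts = [f"message {i}" for i in range(200)]
labels = ["spam" if i % 5 == 0 else "ham" for i in range(200)]

# Step 1: 70% train, 30% held back; stratify keeps the class ratio.
X_train, X_rest, y_train, y_rest = train_test_split(
    texts, labels, test_size=0.30, stratify=labels, random_state=42
)

# Step 2: split the held-back 30% evenly into validation and test.
X_val, X_test, y_val, y_test = train_test_split(
    X_rest, y_rest, test_size=0.50, stratify=y_rest, random_state=42
)

for name, y in [("train", y_train), ("val", y_val), ("test", y_test)]:
    print(name, len(y), dict(Counter(y)))
```

The validation set is used for model and threshold selection; the test set is touched once, at the end, to report the final figure.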

Hands-On Snippet: SMS Spam Classifier (Python)

```python
import pandas as pd
from sklearn.model_selection import train_test_split
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import Pipeline
from sklearn.metrics import classification_report

# Load data; expected columns: text, label (spam / ham)
df = pd.read_csv("sms.csv")

# Stratified hold-out split keeps the spam/ham ratio equal in both sets
X_train, X_test, y_train, y_test = train_test_split(
    df["text"], df["label"],
    test_size=0.2,
    stratify=df["label"],
    random_state=42,
)

# TF-IDF over unigrams and bigrams, followed by a linear baseline classifier
pipe = Pipeline([
    ("tfidf", TfidfVectorizer(min_df=3, ngram_range=(1, 2))),
    ("clf", LogisticRegression(max_iter=200)),
])

pipe.fit(X_train, y_train)
y_pred = pipe.predict(X_test)

# Per-class precision, recall, and F1 on the held-out test set
print(classification_report(y_test, y_pred))
```

Full metric documentation: scikit-learn, “Model evaluation” (https://scikit-learn.org/stable/modules/model_evaluation.html).

AI vs. ML vs. DL — Quick Comparison

| Property | AI | ML | DL |

|---|---|---|---|

| Scope | Umbrella of intelligent methods | Subset of AI | Subset of ML |

| Goal | Intelligent behavior | Learning from data | Learning via deep networks |

| Data needs | Varied (incl. symbolic knowledge) | Required | Typically large datasets |

| Examples | Planning, reasoning, RL | SVM, decision trees, regression | CNN, RNN, Transformer |

| Strengths | Conceptual flexibility | Efficient with smaller data | State-of-the-art on complex tasks |

| Weaknesses | Broad concept | Requires feature engineering | Interpretability, compute cost |

Definitional basis: Bringsjord & Govindarajulu (2024); agent framework: Russell & Norvig (2021).

Open Debates and Controversies

- Transparency vs. predictive quality: deep learning achieves high accuracy at the cost of interpretability; neurosymbolic methods seek a middle ground (Garcez & Lamb, 2023).

- Accountability and agency: who bears responsibility for AI-driven decisions? (Müller, 2024).

- Labor market, democracy, and security risks: EPRS highlights both systemic opportunities and dangers (European Parliament, 2020).

- Standardization of best practices: the NIST AI RMF has become the de facto reference for AI risk management outside the EU (NIST, 2023).

Frequently Asked Questions

1. What is the difference between AI, ML, and DL?

AI is the umbrella term for methods that produce intelligent behavior. ML is a subset of AI — algorithms that learn from data. DL is a subset of ML that relies on deep neural networks with many layers of abstraction (Bringsjord & Govindarajulu, 2024).

2. What are the main types of AI?

The most common distinction is between Narrow AI (designed for specific tasks, such as image classification or language translation) and Artificial General Intelligence (AGI) — a hypothetical system with human-like cognitive flexibility. The EU also recognizes “general-purpose AI” in its regulatory framework (European Parliament, 2024).

3. Will AI replace humans?

AI is transforming the structure of work: it automates routine tasks and augments decision-making. Demand for hybrid skill sets is growing, and the debate — both ethical and economic — is far from settled (European Parliament, 2020).

4. How do you measure how good an AI model is?

It depends on the task. For classification: precision, recall, F1, and ROC AUC. For regression: MAE, MSE, R² (Pedregosa et al., 2011). For generative text models: BLEU, ROUGE, and expert evaluation.
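The regression metrics named above are simple enough to compute by hand, which helps when reading library output. The following sketch implements MAE, MSE, and R² with the standard library; the four target and prediction values are made up.

```python
def regression_metrics(y_true: list, y_pred: list):
    """Return (MAE, MSE, R^2) for paired true and predicted values."""
    n = len(y_true)
    errors = [t - p for t, p in zip(y_true, y_pred)]
    mae = sum(abs(e) for e in errors) / n
    mse = sum(e * e for e in errors) / n
    mean_true = sum(y_true) / n
    ss_res = sum(e * e for e in errors)                   # residual sum of squares
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)    # total sum of squares
    r2 = 1 - ss_res / ss_tot
    return mae, mse, r2


y_true = [3.0, 5.0, 7.0, 9.0]
y_pred = [2.5, 5.0, 8.0, 9.5]

mae, mse, r2 = regression_metrics(y_true, y_pred)
print(f"MAE={mae:.3f}  MSE={mse:.3f}  R2={r2:.3f}")
```

For these values the errors are 0.5, 0, -1, and -0.5, giving MAE 0.5, MSE 0.375, and R² 0.925.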

5. Cloud or edge deployment?

Edge deployment reduces latency and data-transfer costs but makes training and maintenance harder. Cloud offers scalability and centralized management. Many production systems use a hybrid approach.

6. Is AI safe and legal?

That depends on the design. Privacy mechanisms, transparency measures, and risk assessments are required. In the EU, the AI Act applies, and a DPIA is mandatory for high-risk processing (European Parliament, 2024; UODO, n.d.).

7. Where can I find reliable knowledge about AI?

Start with established textbooks (Russell & Norvig, 2021), the Stanford Encyclopedia of Philosophy, EPRS reports, tool documentation (scikit-learn), and standards frameworks (NIST AI RMF Playbook).

8. Do I always need deep learning?

No. A baseline using classical methods often outperforms deep learning at small scale or for straightforward objectives. Always establish a reference point before reaching for complex architectures (Pedregosa et al., 2011).

References

Almeida, T. A., Gómez Hidalgo, J. M., & Yamakami, A. (2011). Contributions to the study of SMS spam filtering: New collection and results. Proceedings of the 2011 ACM Symposium on Document Engineering, 259–262. https://archive.ics.uci.edu/dataset/228/sms+spam+collection

Bringsjord, S., & Govindarajulu, N. S. (2024). Artificial intelligence. In E. N. Zalta & U. Nodelman (Eds.), Stanford encyclopedia of philosophy (Winter 2024 ed.). Stanford University. https://plato.stanford.edu/entries/artificial-intelligence/

European Parliament. (2020, September 4). What is artificial intelligence and how is it used? https://www.europarl.europa.eu/topics/en/article/20200827STO85804/what-is-artificial-intelligence-and-how-is-it-used

European Parliament. (2024, March 13). EU AI Act: First regulation on artificial intelligence. https://www.europarl.europa.eu/topics/en/article/20230601STO93804/eu-ai-act-first-regulation-on-artificial-intelligence

European Parliamentary Research Service. (2021). Artificial intelligence act (Briefing PE 698.792). European Parliament. https://www.europarl.europa.eu/thinktank/en/document/EPRS_BRI(2021)698792

Garcez, A. d’A., & Lamb, L. C. (2023). Neurosymbolic AI: The 3rd wave. Artificial Intelligence Review, 56, 12387–12406. https://doi.org/10.1007/s10462-023-10448-w

GDPR.eu. (n.d.). Art. 35 GDPR — Data protection impact assessment. https://gdpr.eu/article-35-impact-assessment/

Müller, V. C. (2024). Ethics of artificial intelligence and robotics. In E. N. Zalta & U. Nodelman (Eds.), Stanford encyclopedia of philosophy (Fall 2024 ed.). Stanford University. https://plato.stanford.edu/entries/ethics-ai/

National Institute of Standards and Technology. (2023). Artificial intelligence risk management framework (AI RMF 1.0) (NIST AI 100-1). U.S. Department of Commerce. https://doi.org/10.6028/NIST.AI.100-1

National Institute of Standards and Technology. (2023). AI RMF playbook. https://airc.nist.gov/AI_RMF_Knowledge_Base/Playbook

Pedregosa, F., Varoquaux, G., Gramfort, A., Michel, V., Thirion, B., Grisel, O., Blondel, M., Prettenhofer, P., Weiss, R., Dubourg, V., Vanderplas, J., Passos, A., Cournapeau, D., Brucher, M., Perrot, M., & Duchesnay, É. (2011). Scikit-learn: Machine learning in Python. Journal of Machine Learning Research, 12, 2825–2830. https://scikit-learn.org/stable/modules/model_evaluation.html

Russell, S., & Norvig, P. (2021). Artificial intelligence: A modern approach (4th ed.). Pearson. https://aima.cs.berkeley.edu/

Urząd Ochrony Danych Osobowych [UODO]. (n.d.). Ocena skutków dla ochrony danych (DPIA) — obowiązki administratora na gruncie art. 35 RODO. https://uodo.gov.pl/pl/223/812
